You Cannot Manage a Community You Do Not Understand

Running a server by feel works until it doesn't. Here is what data-informed community management actually looks like.

Running on intuition

There is a version of community management that most operators default to, especially early on. You spend time in the server. You develop a feel for what members care about. You plan content and events based on what has worked before, and you respond to what seems urgent in the moment. This approach has real strengths. It keeps the human element present. It allows for flexibility. When something interesting comes up organically in a conversation, you can build on it in real time.

But it has a structural limitation. What you observe by being present in a community is not the same as what is actually happening across the community at scale. You notice the conversations you happened to see. You remember the questions that stood out to you personally. You feel good about the posts that got responses and forget the ones that did not. The result is community management built on a selective and often incomplete picture of what members are actually experiencing.

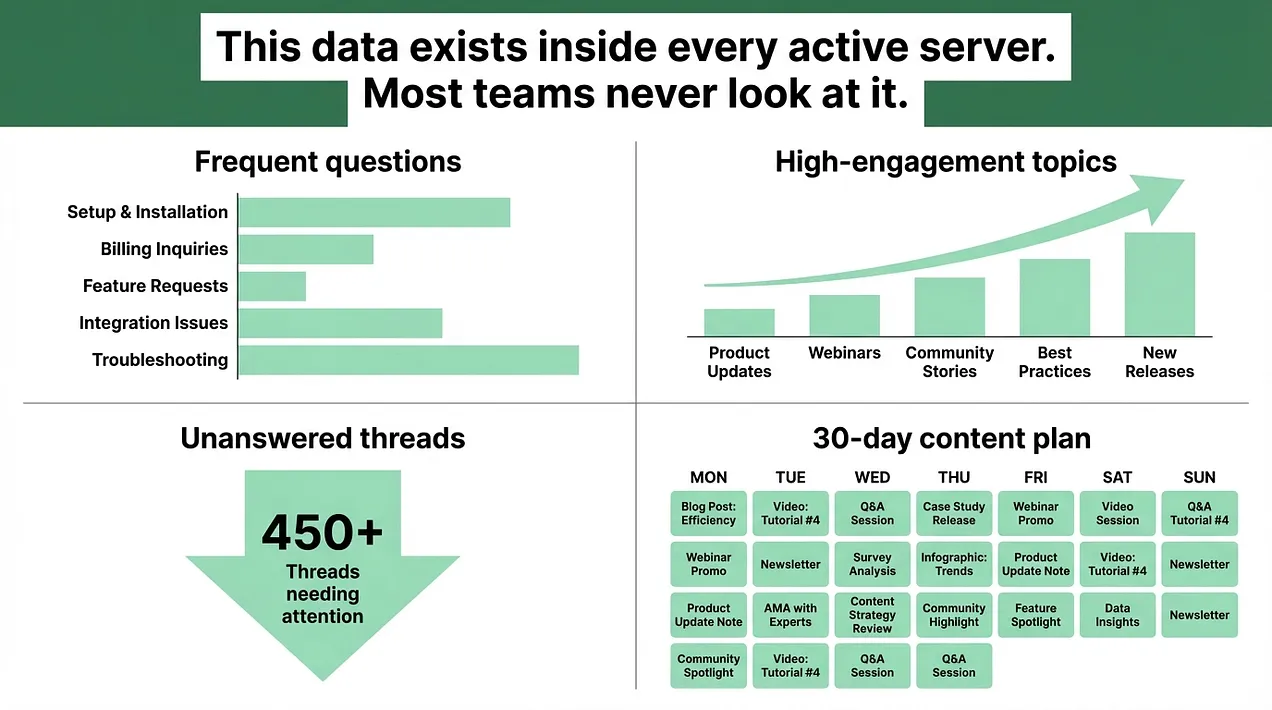

What the data actually shows

I was on a call with someone building a community around a reward-based platform. They had thoughtful goals for what the community should accomplish and a genuine investment in making it work. What they did not have was a systematic view of what their members were actually talking about, asking about, and engaging with.

This gap is more common than it should be. Community managers spend real time inside their servers, but without a structured way to track conversation patterns, they make decisions based on impression rather than information.

The conversations happening in your community contain specific, actionable data: which questions appear repeatedly, in different forms, across different channels; which topics consistently generate discussion and which are met with silence; which channels are reliably active and which are gradually losing engagement; and which parts of the onboarding process produce the most confused follow-up questions from new members. All of that information lives inside the server. Most teams never systematically extract it.
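Repeated questions rarely arrive word for word, so spotting them means grouping near-duplicates. The sketch below is one minimal way to do that, assuming messages have already been exported into a list of dicts with hypothetical `channel` and `content` keys. It treats any message ending in `?` as a question and uses the standard library's `difflib.SequenceMatcher` as a simple stand-in for more robust similarity measures; the 0.75 threshold is an illustrative assumption, not a tuned value.

```python
import re
from difflib import SequenceMatcher


def normalize(text: str) -> str:
    # Lowercase and drop punctuation so "How do I claim?" matches "how do i claim".
    return re.sub(r"[^a-z0-9 ]+", "", text.lower()).strip()


def is_question(text: str) -> bool:
    # Crude heuristic (assumption): a message ending in "?" counts as a question.
    return text.rstrip().endswith("?")


def group_repeated_questions(messages, threshold=0.75):
    """Cluster near-duplicate questions across channels.

    messages: list of dicts with hypothetical keys "channel" and "content".
    Returns clusters of (channel, content) pairs, largest first.
    """
    clusters = []  # each cluster: {"key": normalized first question, "items": [...]}
    for msg in messages:
        if not is_question(msg["content"]):
            continue
        norm = normalize(msg["content"])
        item = (msg["channel"], msg["content"])
        for cluster in clusters:
            # Compare against the first question in each cluster (greedy, order-sensitive).
            if SequenceMatcher(None, norm, cluster["key"]).ratio() >= threshold:
                cluster["items"].append(item)
                break
        else:
            clusters.append({"key": norm, "items": [item]})
    return sorted((c["items"] for c in clusters), key=len, reverse=True)
```

A large cluster whose items come from several channels is exactly the "same question in different forms across different channels" pattern. Matching only against each cluster's first question keeps the sketch short, at the cost of some sensitivity to message order.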

Building tools to understand your community

I have built custom tools to analyze community conversations because this data is so consistently underused, and the reason is practical: the answers to the questions above directly affect operational decisions. They change what goes into a content calendar, how support channels are structured, and where onboarding documentation needs more clarity.

A content calendar built on what you know your members actually ask about and engage with is fundamentally different from one built on what you assume they care about. You are planning around documented conversation patterns rather than guesses. The content that comes out of that process lands more consistently, because it responds to what members have already shown they want to talk about.

A support FAQ built on the questions that actually repeat every week covers what members genuinely need answered rather than what the team thinks they probably ask. When the FAQ is accurate, the volume of repetitive questions the team handles drops, because the system serves real needs rather than assumed ones.

An onboarding flow built on data about where new members get confused or ask for clarification is more effective than one built on what feels intuitive to the person who designed it. You are filling the actual gaps rather than the ones you imagined.
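For the onboarding case, one rough proxy for confusion is how many questions new members ask, and in which channels. The following is a hypothetical sketch, not the author's tooling: it assumes exported records with `author`, `channel`, `content`, and `sent_at` fields plus a separate map of join dates, and both the seven-day definition of a "new" member and the trailing-`?` question test are illustrative assumptions.

```python
from collections import Counter
from datetime import datetime, timedelta


def onboarding_question_hotspots(messages: list, joined_at: dict[str, datetime],
                                 window_days: int = 7) -> Counter:
    """Count questions asked by members during their first days in the server.

    messages: list of dicts with hypothetical keys "author", "channel",
              "content", and "sent_at" (a datetime).
    joined_at: author -> join datetime.
    Returns a Counter of channel -> number of early questions.
    """
    window = timedelta(days=window_days)
    hotspots = Counter()
    for msg in messages:
        joined = joined_at.get(msg["author"])
        if joined is None:
            continue  # no join record, so we cannot tell whether the member is new
        is_new = timedelta(0) <= msg["sent_at"] - joined <= window
        # Crude heuristic (assumption): a trailing "?" marks a question.
        if is_new and msg["content"].rstrip().endswith("?"):
            hotspots[msg["channel"]] += 1
    return hotspots
```

The channels at the top of `hotspots.most_common()` are candidates for clearer onboarding documentation; reading the actual questions there shows what the documentation is missing.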

The 30-day content calendar as a data output

One concrete application of this approach is the content calendar. Most community managers build their calendars by looking at what they have done before, what feels relevant in the current moment, or what leadership has asked them to promote. A calendar built on conversation data starts from a different place. You look at what members have been asking about in the past few weeks. You identify which topics consistently generate responses. You note which areas have gone quiet and might benefit from intentional prompts. You schedule based on what you know generates engagement, not what you hope will.

This does not mean every piece of content is reactive. Planned announcements, events, and product updates have a place in any content calendar. But the conversational content that fills the space between those structured posts lands better when it is based on what members have already shown they want to discuss.
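A calendar like this can be drafted mechanically before a human edits it. The sketch below is a hypothetical illustration under stated assumptions: it expects reply counts for past posts grouped by topic (each list non-empty), ranks topics by median replies, keeps planned announcements and events on their dates, and rotates the top topics plus prompts for quiet areas through the remaining days of a 30-day window. The function name, parameters, and the `prompt:` label are all assumptions.

```python
from datetime import date, timedelta
from statistics import median


def build_calendar(start: date, topic_replies: dict, planned: dict,
                   quiet_topics=(), days: int = 30, top_n: int = 3) -> dict:
    """Draft a content calendar from conversation data.

    start: first day of the window.
    topic_replies: topic -> reply counts on past posts (hypothetical input, non-empty lists).
    planned: date -> fixed item such as an announcement, event, or product update.
    quiet_topics: areas that have gone quiet and get an intentional prompt.
    Returns date -> scheduled item for each day that has something scheduled.
    """
    # Rank by median replies so one unusually popular post does not dominate.
    ranked = sorted(topic_replies, key=lambda t: median(topic_replies[t]), reverse=True)
    rotation = ranked[:top_n] + [f"prompt: {t}" for t in quiet_topics]
    calendar, slot = {}, 0
    for offset in range(days):
        day = start + timedelta(days=offset)
        if day in planned:
            calendar[day] = planned[day]  # structured posts keep their dates
        elif rotation:
            # Conversational content fills the gaps, cycling through the rotation.
            calendar[day] = rotation[slot % len(rotation)]
            slot += 1
    return calendar
```

Using the median rather than the mean means a topic with one 30-reply post and two near-silent ones does not outrank a topic that draws a steady handful of replies every time.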

What community engagement analytics actually requires

The shift from intuition-based to data-informed community management does not always require custom-built analytics tools. It starts with structured observation.

Set aside time each week to look at which conversations received responses and which did not. Note which questions are appearing for the second or third time. Track which channels are trending up or down in activity. Document what you find.

Over four to six weeks, patterns become clear and consistent. A question that appears three times in the same week is a content gap. A channel that has gone quiet for two consecutive weeks raises a channel-architecture question worth examining. A topic that reliably generates ten or more replies is a signal about what your audience wants to talk about. Those patterns become the foundation for a content strategy, an FAQ update, a support system improvement, or a channel architecture change. None of it requires guessing.
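Once the weekly notes are logged, the three rules in this section are simple enough to check in a few lines. This is a minimal sketch under stated assumptions: questions are logged as already-normalized strings, channel activity is a list of weekly message counts, "gone quiet" is taken to mean zero messages a week, and "reliably generates ten replies" is read as every recent post getting at least ten. Those interpretations and every name here are hypothetical.

```python
from collections import Counter


def weekly_review(question_log, channel_weekly_counts, topic_replies,
                  repeat_threshold=3, quiet_weeks=2, quiet_level=0, reply_signal=10):
    """Apply the three weekly observation rules to logged data.

    question_log: normalized question strings logged this week.
    channel_weekly_counts: channel -> messages per week, oldest first.
    topic_replies: topic -> reply counts on recent posts about that topic.
    """
    # Rule 1: the same question three or more times in a week is a content gap.
    asked = Counter(question_log)
    content_gaps = sorted(q for q, n in asked.items() if n >= repeat_threshold)

    # Rule 2: a channel at or below `quiet_level` for the last `quiet_weeks` weeks.
    quiet_channels = sorted(
        ch for ch, weeks in channel_weekly_counts.items()
        if len(weeks) >= quiet_weeks
        and all(n <= quiet_level for n in weeks[-quiet_weeks:])
    )

    # Rule 3: "reliably" read as every recent post reaching `reply_signal` replies.
    signal_topics = sorted(
        t for t, replies in topic_replies.items()
        if replies and min(replies) >= reply_signal
    )
    return {"content_gaps": content_gaps,
            "quiet_channels": quiet_channels,
            "signal_topics": signal_topics}
```

Keeping the thresholds as parameters makes it easy to loosen "quiet" to a low but non-zero level for large servers, where a channel rarely drops to nothing.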

The gap between the community you think you have and the one you actually have

The most consequential version of the problem shows up in member retention. Members who disengage from a community often do so quietly. They stop posting. They stop responding. They stop attending events. And they rarely explain why. The community manager sees declining engagement numbers without knowing which specific gaps in the experience caused the decline. Was it the lack of relevant content? The unanswered questions that made members feel their contributions were not valued? The onboarding experience that left them uncertain about where they fit?

All of these questions have answers that live inside the server's conversation history. The teams that build the habit of looking at that data regularly identify the problems while they are still recoverable. The teams that run on intuition alone tend to notice after the damage is done.

Managing a community you do not understand is a risk that compounds quietly. The gap between what you think is happening and what is actually happening is where members who could have become your most engaged stop participating. Closing that gap is an operational decision, not a creative one.
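Quiet disengagement is one of the easier patterns to surface from message history, because it shows up as a member who used to post and no longer does. The sketch below assumes hypothetical exported records with `author` and `sent_at` fields; the 30-day recent window, 60-day baseline, and five-message minimum are illustrative choices, not recommendations. It only flags who went quiet; finding out why still means reading the conversations around them.

```python
from collections import Counter
from datetime import datetime, timedelta


def quietly_disengaged(messages: list, now: datetime, recent_days: int = 30,
                       baseline_days: int = 60, min_baseline: int = 5) -> list:
    """Return members who were active earlier but have posted nothing recently.

    messages: list of dicts with hypothetical keys "author" and "sent_at" (datetime).
    A member is flagged if they sent at least `min_baseline` messages in the
    `baseline_days` before the recent window and none inside the window.
    """
    recent_start = now - timedelta(days=recent_days)
    baseline_start = recent_start - timedelta(days=baseline_days)
    baseline, recent = Counter(), Counter()
    for msg in messages:
        sent = msg["sent_at"]
        if recent_start <= sent <= now:
            recent[msg["author"]] += 1
        elif baseline_start <= sent < recent_start:
            baseline[msg["author"]] += 1
    # Counter returns 0 for authors with no recent messages.
    return sorted(a for a, n in baseline.items()
                  if n >= min_baseline and recent[a] == 0)
```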

*Daniel Jeong is a Discord infrastructure consultant helping companies build scalable community systems that function as core business assets. To learn more, visit [danieljeong.org](https://danieljeong.org).*