Moderation Is Not Punishment. It Is Reputation Infrastructure.

Every unaddressed comment in your Discord is a message to every new member who reads it. Here is what that costs you.

Moderation gets treated as a reactive function in most Discord communities. Someone posts something bad. Someone on the team sees it. Someone responds or removes it. The problem is handled.

That is not a moderation system. That is damage control. The distinction matters because damage control only works when someone is watching, and communities run around the clock.

What New Members Actually See

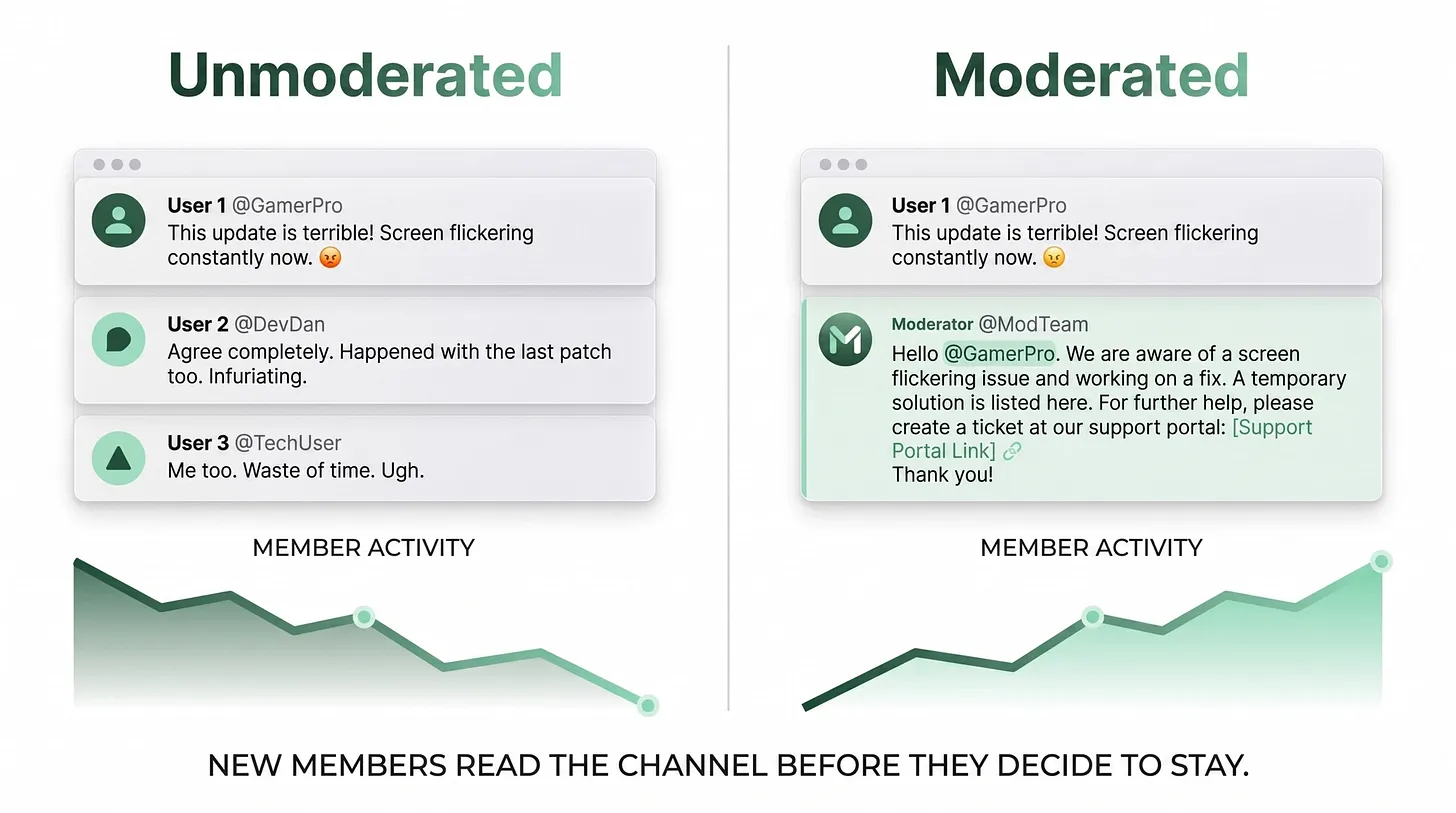

Before a new member decides to engage with a Discord community, they read it, usually for five to fifteen minutes. They scroll through the public channels and form an impression of whether this is a place worth their time.

During that reading period, the moderation state of your server is visible. A channel with unaddressed complaints, escalating negativity, and zero staff presence reads as a server that is not worth investing in. Not because the negativity is overwhelming, but because the absence of a response signals that nobody in charge is paying attention.

The inverse applies when moderation is working. A channel where complaints are addressed quickly, where the tone is generally constructive, and where the staff voice is visible and helpful reads as a place that is professionally run. That impression influences whether the new member stays long enough to find value.

In most servers, nobody is thinking about the new member's reading experience. The team is focused on the active conversation, not on what the channel looks like to someone arriving for the first time.

The Sentiment Spiral

Unmoderated Discord communities follow a consistent pattern. A few vocal negative members establish a tone. Other members who might be positive become reluctant to post because the environment feels hostile. The negative members fill the silence. The tone gets worse. Eventually new members arrive, see the tone, and leave. The positive members who stayed stop checking in. The channel belongs to the negative members by default.

This spiral moves faster than most teams expect. A server that felt reasonably healthy three weeks ago can look very different today if escalation has gone unaddressed.

I have worked on servers in this state. The consistent finding is that the negative members are usually a small number of people. In most cases, two to five accounts are responsible for the majority of the negative content. But because their volume is high relative to the constructive content, the perception is that the whole community has turned.

Moderation infrastructure interrupts the spiral early, before it becomes visible to new arrivals.
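The claim that a handful of accounts produce most of the negative content is something a team can check against its own server. The Python sketch below is one way to do that, assuming you already have a list with one author ID per message that a moderator flagged as negative. The function name `top_author_share` and the sample data are hypothetical and exist only to show the arithmetic.

```python
from collections import Counter


def top_author_share(flagged_author_ids, top_n=5):
    """Return the fraction of flagged messages posted by the top_n most active authors."""
    if not flagged_author_ids:
        return 0.0
    counts = Counter(flagged_author_ids)
    # most_common(top_n) yields (author_id, count) pairs, highest count first.
    top_total = sum(count for _, count in counts.most_common(top_n))
    return top_total / len(flagged_author_ids)


# Hypothetical sample: 35 flagged messages spread across 9 accounts.
flagged = ["u1"] * 14 + ["u2"] * 9 + ["u3"] * 6 + ["u4", "u5", "u6", "u7", "u8", "u9"]

share = top_author_share(flagged, top_n=3)
print(f"Top 3 accounts posted {share:.0%} of flagged messages")
```

If the share for the top few accounts is high, the problem is concentrated and can be addressed with a small number of direct conversations rather than a server-wide crackdown.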

What Infrastructure-Level Moderation Actually Requires

There are four components to moderation infrastructure that actually works at scale.

The first is automated phrase detection. Certain types of negative content are predictable. Product quality complaints, calls to not purchase, and brand criticism all follow patterns. Automated detection can flag or remove these posts and simultaneously open a private thread with the member. The private thread allows the team to address the concern directly without the complaint sitting in a public channel building an audience. This is not about silencing legitimate criticism. It is about routing it to the right place. A member with a real quality concern deserves a real response. That response happens more effectively in a private thread than in a public channel where other frustrated members can pile on.

The second component is scheduled rule reminders. Most servers post rules once in a pinned message and assume members read them. They do not. Regular visible reminders of community standards, posted during high-traffic hours, accomplish two things. They remind existing members of the behavioral expectations. They signal to new members that the rules are enforced, not decorative.

The third component is pre-built response frameworks for common issues. Product quality complaints. Shipping delays. Brand controversies. Anything that comes up repeatedly should have a documented response that any team member can adapt and send within minutes. This compresses response time from hours to minutes and keeps the tone consistent.

The fourth component is a staffing model that covers the hours when the server is most active. A moderation system that only operates during business hours covers roughly a third of the day, and may miss the hours when your community is most engaged. Understanding your peak activity hours and ensuring coverage during those hours is a baseline operational requirement.
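The first and third components can be sketched together: detect a predictable complaint pattern, then pick the matching pre-built response for a private reply. The Python below is a minimal illustration, not a production filter. The category names, regular expressions, response wording, and the `route_message` function are all hypothetical. The Discord-side actions (removing the post, opening the private thread) are left out because they depend on the bot library in use.

```python
import re
from dataclasses import dataclass
from typing import Optional

# Hypothetical patterns; a real server would tune these against its own history.
PATTERNS = {
    "quality_complaint": re.compile(
        r"\b(broke|broken|defective|poor quality|fell apart)\b", re.IGNORECASE
    ),
    "purchase_warning": re.compile(
        r"\b(do not|don't|dont) (buy|purchase|order)\b", re.IGNORECASE
    ),
    "shipping_delay": re.compile(
        r"\b(still waiting|hasn't shipped|never arrived)\b", re.IGNORECASE
    ),
}

# Hypothetical response templates that a team member can adapt before sending.
RESPONSES = {
    "quality_complaint": "Hi {name}, sorry about the problem with your order. "
                         "Can you tell us what happened so we can make it right?",
    "purchase_warning": "Hi {name}, we saw your post and want to understand "
                        "what went wrong. Can you share the details here?",
    "shipping_delay": "Hi {name}, sorry for the wait. Send us your order "
                      "number and we will check the status.",
}


@dataclass
class Routing:
    category: str        # which pattern matched
    private_reply: str   # draft message for the private thread


def route_message(author_name: str, text: str) -> Optional[Routing]:
    """Return a routing decision for the first matching category, or None."""
    for category, pattern in PATTERNS.items():
        if pattern.search(text):
            reply = RESPONSES[category].format(name=author_name)
            return Routing(category=category, private_reply=reply)
    return None


decision = route_message("Sam", "Honestly, don't buy from them")
if decision is not None:
    print(decision.category)
    print(decision.private_reply)
```

Keeping patterns and responses in plain dictionaries means a non-developer on the team can review and update them without touching the matching logic. Messages that match nothing return `None` and stay in the public channel untouched.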

The Reputation Cost of Inaction

Every hour that a negative comment sits unanswered in a public channel is an hour that new members are reading it and forming their conclusion. The community is a public record of how your brand responds when things are not going perfectly. Companies that respond thoughtfully, consistently, and quickly to community concerns build trust with the people who are watching. Companies that let complaints sit build a different kind of reputation. Moderation infrastructure is not an expense. It is an investment in the quality of the signal your community sends to everyone who reads it before deciding whether to stay.

Daniel Jeong is a Discord infrastructure consultant who helps companies design moderation systems that protect community quality at scale. https://danieljeong.org