Every Discord That Skips Moderation Infrastructure Ends Up With the Same Problem

It is not a behavior issue. It is a structural one.

The Pattern Shows Up Regardless of Size

I have worked with communities that range from a few hundred members to over a million. The ones that struggle with moderation share something in common. Not the same industry, not the same audience, not the same founding team. What they share is a decision that was made early on, usually without much deliberation: moderation was something they would handle when it became necessary.

The problem with that logic is that by the time moderation becomes visibly necessary, the culture is already in motion. The early members who experienced the unstructured environment have already set the tone for how the server feels to new people. The members who found it uncomfortable have already left. The ones who stayed are the ones who either did not notice or did not mind.

This is not a hypothetical. It is a pattern I see consistently across server types, company sizes, and industries. And the fix is not stricter rules. The fix is earlier infrastructure.

What Moderation Infrastructure Actually Means

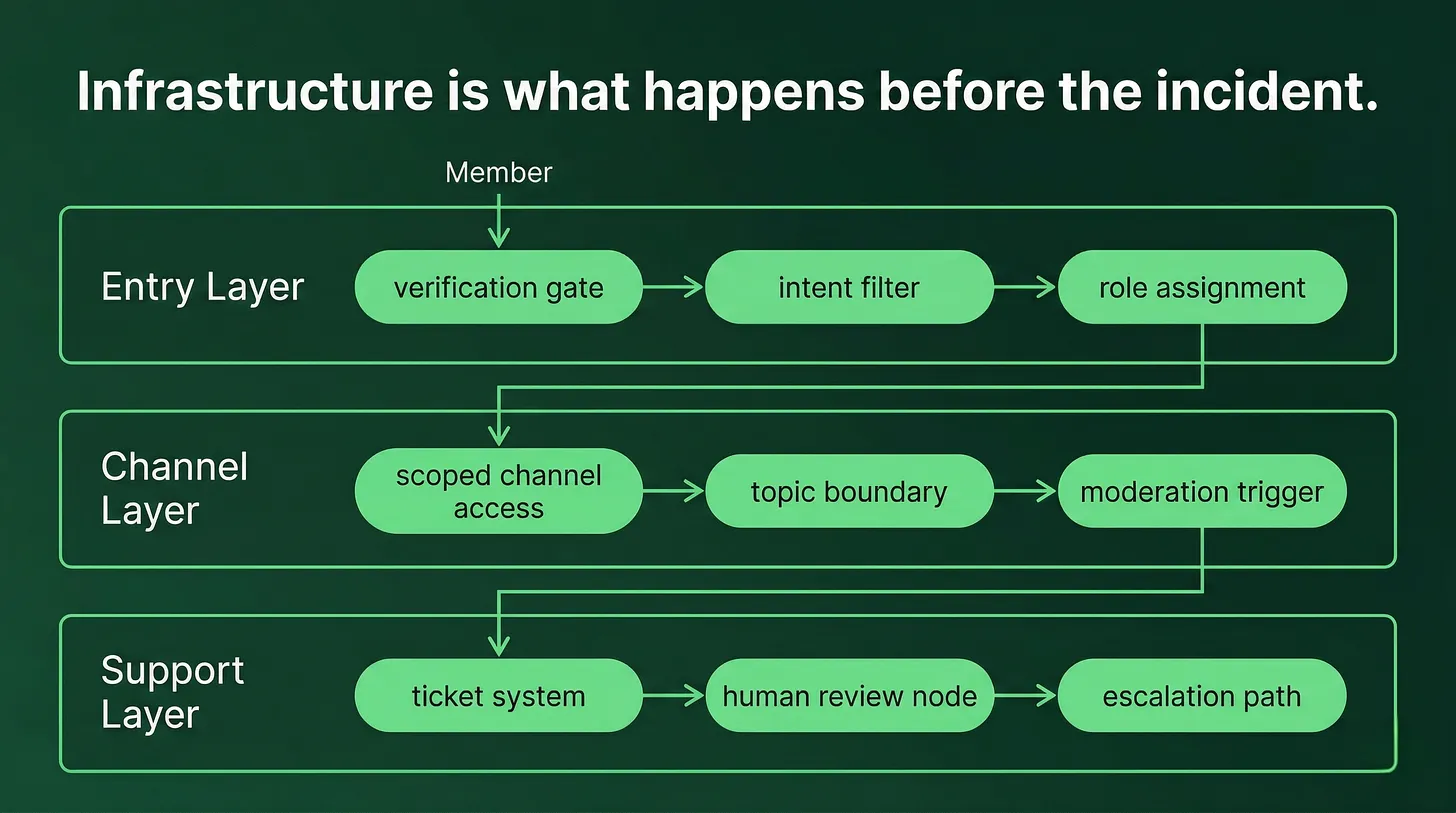

When most community managers think about moderation, they think about rules. A rules channel at the top of the server. A list of things members are not allowed to do. A bot that removes flagged language. That is the visible layer. It is the part that a team can point to and say moderation is handled.

The invisible layer is the structure that prevents incidents from occurring in the first place. This is where the actual work lives, and it is where most communities fall short.

Entry verification is the first layer, and the first decision point. Who is allowed in, and under what conditions? A server that lets anyone join with no friction collects a population that includes people who are testing limits, people who have been removed from other communities, and people with no genuine connection to the product, topic, or community purpose. Verification does not have to be elaborate. It has to be intentional. A short intake question, a role gate, a message that requires a response before access is granted. The goal is not to exclude people. The goal is to establish that entry requires deliberate action.

Channel scoping is the second layer. Every channel carries an implied purpose. When that purpose is vague, conversations drift. When conversations drift, friction follows. A channel called general is an invitation for everything, including the things you did not want. Named channels with visible descriptions and clear topic boundaries reduce ambient noise and give moderators a basis for redirecting members rather than removing them.

Role access control is the third layer. New members should not have access to every part of the server on day one. Not because they are suspect, but because context matters. Dropping someone into an advanced discussion they have no frame of reference for is a setup for misunderstanding. Progressive access, earned through participation or tenure, creates a path that mirrors the community structure and reduces the likelihood of disruptive entries into spaces that require shared context.

Support routing is the fourth layer. When members have complaints, frustration, or conflict, they need a place to take it that is not a public channel. A server without a clear support pathway forces those conversations into open spaces, which amplifies them and makes the entire community an audience for a problem that should have been handled privately. A ticket system, a DM to a moderation role, a dedicated support channel. The specific mechanism matters less than having one that members know exists.

Moderation triggers are the fifth layer. Automated responses to specific behaviors, keyword flags that route messages to a review queue, rate limits on new accounts. These are not replacements for human judgment. They are the early warning system that gives your team the information they need before a situation becomes visible to the full membership.

These five layers form the architecture. None of them is especially complex in isolation. Together, they do something rules alone cannot: they shape the environment before behavior occurs, rather than reacting to it after the damage is done.
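To make the fifth layer concrete, here is a minimal sketch in plain Python of how keyword flags and a new-account rate limit might feed a review queue. It does not use any Discord library. The `Message` shape, the `TriggerPipeline` class, the placeholder keywords, and every threshold are assumptions chosen for illustration, not recommendations.

```python
from collections import defaultdict, deque
from dataclasses import dataclass

# Hypothetical values for illustration only; tune them for your own server.
FLAGGED_KEYWORDS = {"free nitro", "dm me for crypto"}  # placeholder phrases
NEW_ACCOUNT_AGE_SECONDS = 7 * 24 * 3600  # accounts younger than this count as new
NEW_ACCOUNT_MAX_MESSAGES = 5             # messages allowed per rolling window
RATE_WINDOW_SECONDS = 60


@dataclass
class Message:
    author_id: str
    account_age_seconds: int
    content: str
    sent_at: float  # Unix timestamp in seconds


class TriggerPipeline:
    """Routes messages to 'review', 'rate_limited', or 'allow'."""

    def __init__(self):
        self.review_queue = []              # flagged messages awaiting a human
        self._recent = defaultdict(deque)   # author_id -> recent send times

    def handle(self, msg):
        # Keyword flags route to humans; nothing is removed automatically.
        text = msg.content.lower()
        if any(keyword in text for keyword in FLAGGED_KEYWORDS):
            self.review_queue.append(msg)
            return "review"

        # The rate limit applies only to new accounts.
        if msg.account_age_seconds < NEW_ACCOUNT_AGE_SECONDS:
            window = self._recent[msg.author_id]
            # Drop timestamps that have aged out of the rolling window.
            while window and msg.sent_at - window[0] >= RATE_WINDOW_SECONDS:
                window.popleft()
            if len(window) >= NEW_ACCOUNT_MAX_MESSAGES:
                return "rate_limited"
            window.append(msg.sent_at)

        return "allow"
```

The design choice worth noting is that a keyword match only queues the message for a person to look at. That keeps the trigger in the role described above: an early warning for the team, not a substitute for their judgment.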

Why Large Communities Still Get This Wrong

One of the conversations that clarified this for me was with a founder building a community around a consumer technology product. They had looked at other Discord servers in their niche and noticed a consistent problem: the communities became toxic over time, regardless of how well-designed the product was.

What they described was accurate and recognizable. Communities without deliberate moderation structure default toward the same failure patterns. Offensive comments in channels meant for product conversation. Long-time members who police newcomers in ways that drive them out rather than welcome them in. Quiet exits by the members who came with the most genuine interest.

Company size does not protect you from this outcome. A large company with no moderation infrastructure produces the same result as a ten-person startup with no moderation infrastructure. The scale may make the failure more visible, but the root cause is identical.

The reason large communities still get this wrong is often organizational. Moderation is assigned to whoever has bandwidth rather than whoever designed the system. It is treated as reactive triage rather than structural design. The people responsible for it are responding to incidents instead of building the environment that reduces how often those incidents occur. This is why the moderation conversation needs to happen before launch, not after the first incident.

The Signal That Something Is Wrong

There is a reliable early signal that a community's moderation infrastructure is insufficient. It is not the obvious incidents. Those are symptoms. The signal is what new members experience in their first 48 hours.

New members who enter a well-structured server find a clear path. They know where to go first, what is expected of them, and what the community is for. Even if they do not engage immediately, they understand the environment and what role they are meant to play in it.

New members who enter a poorly structured server find ambiguity. They drop into channels with no context for the existing conversations. They see interactions that do not communicate what the community is actually about. They encounter members who are either ignoring them or engaging in ways that are neither welcoming nor useful. Most of them leave without saying anything. There is no complaint to investigate, no incident to address. They simply do not come back.

The communities that retain members effectively are not always the most active ones. They are the ones where new members experience clarity early. Moderation infrastructure is a large part of what creates that clarity, because it shapes the environment that new members walk into before anyone in the community has said a single word to them.
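Because these exits generate no complaint, one way to make them visible is to count them. The sketch below is one possible way to do that, assuming you can export a join time and a last-seen time for each member. The `MemberActivity` record and the `silent_exit_rate` function are hypothetical names, and the measure itself is an illustration rather than a standard metric.

```python
from dataclasses import dataclass
from typing import Optional

FIRST_WINDOW_SECONDS = 48 * 3600  # the first 48 hours after joining


@dataclass
class MemberActivity:
    joined_at: float               # Unix timestamp of the join
    last_seen_at: Optional[float]  # None if never seen after joining


def returned_after_first_window(member):
    """True if the member was seen again after their first 48 hours."""
    if member.last_seen_at is None:
        return False
    return member.last_seen_at - member.joined_at > FIRST_WINDOW_SECONDS


def silent_exit_rate(members):
    """Share of a join cohort that never came back after the first 48 hours.

    Pass only members who joined more than 48 hours ago; newer members
    have not had the chance to return yet and would inflate the rate.
    """
    if not members:
        return 0.0
    gone = sum(1 for m in members if not returned_after_first_window(m))
    return gone / len(members)
```

Tracked per weekly join cohort, a number like this gives the team something to watch before and after a structural change, such as adding an intake step or narrowing what new members can see.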

Building It Before You Need It

The practical question to ask before launching a Discord community, or before auditing one that is already running, is not what the rules are. The question is what happens when someone joins. Where do they go first? What do they see in the first ten seconds? What action does the server structure expect them to take? What prevents them from immediately entering a space they have no context for? Who or what is responsible for their first experience?

If those questions do not have clear, documented answers, the moderation infrastructure is not in place. The rules document is not a substitute. The moderation bot is not a substitute. Having a few active people with moderator roles is not a substitute. The architecture lives in the server structure: the channel layout, the role hierarchy, the access gates, the verification flow, and the support pathways. It is built before the first member joins, and it is adjusted as the community grows and the membership changes.

The communities that handle moderation well did not get lucky with their audience. They made structural decisions before they had enough members to see the problems those decisions would prevent. That early work compounded. The members they retained shaped the culture that the next wave of members joined. And the next wave shaped the one after that.

This is how community culture is actually built. Not through rules posted in a channel. Through the structure that was there before anyone had a reason to read them.
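For teams that want to run the join-flow questions from this section as a literal audit, a checklist that refuses to pass until every question has a written answer is enough. This is a minimal sketch; the function name `unanswered_questions` and the dictionary format are assumptions, and the questions are lightly paraphrased from the ones above.

```python
# The five join-flow questions from this section.
ONBOARDING_QUESTIONS = [
    "Where do new members go first?",
    "What do they see in the first ten seconds?",
    "What action does the server structure expect them to take?",
    "What prevents them from entering a space they have no context for?",
    "Who or what is responsible for their first experience?",
]


def unanswered_questions(answers):
    """Return the questions that have no documented answer yet.

    `answers` maps a question to its written answer. A missing key or a
    blank string counts as unanswered.
    """
    return [q for q in ONBOARDING_QUESTIONS if not answers.get(q, "").strip()]


# Example: two questions documented, three still open.
documented = {
    "Where do new members go first?": "A read-only welcome channel.",
    "What do they see in the first ten seconds?": "The purpose statement and one next step.",
}
for question in unanswered_questions(documented):
    print("Missing answer:", question)
```

An empty list is the point at which the structure can be called documented. Anything still on the list is a decision the server is currently making by default.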

Daniel Jeong builds Discord infrastructure for startups and growth-stage companies. His work focuses on the systems that keep communities functional as they scale: onboarding, moderation architecture, support workflows, and engagement frameworks. https://danieljeong.org